diff --git a/contents/blog/product-engineer-tools.md b/contents/blog/product-engineer-tools.md

index cc574b97ac2d..2d382b2cac3b 100644

--- a/contents/blog/product-engineer-tools.md

+++ b/contents/blog/product-engineer-tools.md

@@ -77,7 +77,7 @@ A trend popular among product engineers is bringing the code framework and infra

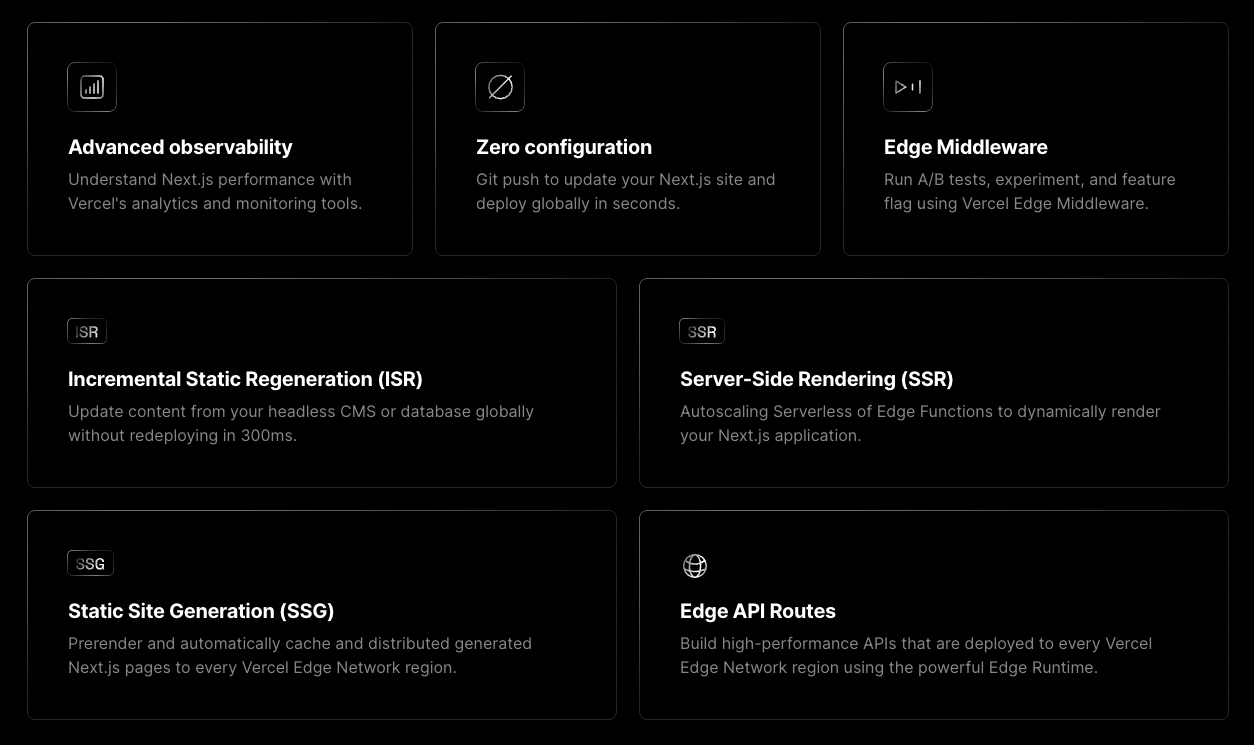

The benefits of integrating infrastructure and code from Vercel and Next.js.

-Other examples of these code and infrastructure combos include [Supabase](https://supabase.com/), [PlanetScale](https://planetscale.com/), and [Hasura](/customers/hasura). They abstract away infrastructure work while providing speed and tailored offerings. Software engineers and large teams might find their limited customization and options constraining, but product engineers love them.

+Other examples of these code and infrastructure combos include [Supabase](/tutorials/supabase-query), [PlanetScale](https://planetscale.com/), and [Hasura](/customers/hasura). They abstract away infrastructure work while providing speed and tailored offerings. Software engineers and large teams might find their limited customization and options constraining, but product engineers love them.

## Tools for owning bugs and incidents

diff --git a/contents/docs/data-warehouse/setup/postgres.md b/contents/docs/data-warehouse/setup/postgres.md

index de1c3d24f603..11d88fcb4d2f 100644

--- a/contents/docs/data-warehouse/setup/postgres.md

+++ b/contents/docs/data-warehouse/setup/postgres.md

@@ -25,6 +25,8 @@ To link Postgres:

The data warehouse then starts syncing your Postgres data. You can see details and progress in the [data warehouse settings tab](https://us.posthog.com/data-warehouse/settings).

+> **Looking for an example of the Postgres source?** Check out our tutorial where we [connect and query Supabase data](/tutorials/supabase-query).

+

#### Inbound IP addresses

We use a set of IP addresses to access your Postgres instance. To ensure this connector works, add these IPs to your inbound security rules to enable the data import:

diff --git a/contents/docs/data-warehouse/tutorials.mdx b/contents/docs/data-warehouse/tutorials.mdx

index 84bca2e0ee8e..203414d7100b 100644

--- a/contents/docs/data-warehouse/tutorials.mdx

+++ b/contents/docs/data-warehouse/tutorials.mdx

@@ -9,4 +9,5 @@ Got a question which isn't answered below? Head to [the community forum](/questi

- [How to set up Stripe reports](/tutorials/stripe-reports)

- [How to set up Hubspot reports](/tutorials/hubspot-reports)

- [How to set up Zendesk reports](/tutorials/zendesk-reports)

-- [How to set up Google Ads reports](/tutorials/google-ads-reports)

\ No newline at end of file

+- [How to set up Google Ads reports](/tutorials/google-ads-reports)

+- [How to query Supabase in PostHog](/tutorials/supabase-query)

\ No newline at end of file

diff --git a/contents/tutorials/nextjs-supabase-signup-funnel.mdx b/contents/tutorials/nextjs-supabase-signup-funnel.mdx

index 057017aeedee..0350911c1288 100644

--- a/contents/tutorials/nextjs-supabase-signup-funnel.mdx

+++ b/contents/tutorials/nextjs-supabase-signup-funnel.mdx

@@ -16,7 +16,7 @@ _Estimated reading time: 20 minutes_ ☕☕☕

With the number of software frameworks and services available to help with developer productivity and feature building, it's never been a better time to be a software developer. One of most important things you'll have to build, whether building a SaaS, developer tool, or consumer app, is a sign up flow that begins on a landing page and ideally results in a successful sign up. The purpose of this sign up flow is to get as many real users through to being successfully signed up on your app or platform. So, it's important that you can measure whether your sign up flow is converting and where any potential sign ups are dropping out of that funnel.

-In this tutorial, we'll create a simple sign up flow in a Next.js app, starting with a Supabase example for authentication. We'll then look at how you can instrument that sign up flow using the [PostHog JavaScript SDK](/docs/integrate/client/js) and create a sign up [funnel visualization](/docs/user-guides/funnels) within PostHog to analyze the success - or failure - of the sign up flow.

+In this tutorial, we'll create a simple sign up flow in a Next.js app, starting with a [Supabase](/tutorials/supabase-query) example for authentication. We'll then look at how you can instrument that sign up flow using the [PostHog JavaScript SDK](/docs/integrate/client/js) and create a sign up [funnel visualization](/docs/user-guides/funnels) within PostHog to analyze the success - or failure - of the sign up flow.

## Before you begin

diff --git a/contents/tutorials/supabase-query.md b/contents/tutorials/supabase-query.md

new file mode 100644

index 000000000000..0a74ed9f7cc7

--- /dev/null

+++ b/contents/tutorials/supabase-query.md

@@ -0,0 +1,166 @@

+---

+title: How to query Supabase data in PostHog

+date: 2024-08-01

+author:

+ - ian-vanagas

+tags:

+ - data warehouse

+---

+

+Combining your database and analytics data is a powerful way to understand your users. [Supabase](https://supabase.com/) is a popular choice for handling that app data, as it provides a database, auth, storage, and more all-in-one.

+

+Because Supabase is built on Postgres, we can link and query it in PostHog using our data warehouse. This tutorial shows you how to do that and provides some example insights you can create with the data afterward.

+

+## Linking Supabase data to PostHog

+

+To start, you need both a Supabase and PostHog account. Once you have those, head to PostHog's [data warehouse tab](https://us.posthog.com/data-warehouse) and:

+

+1. Click **Link source.** (or, if you've already added a source, **Manage sources** then **+ New source**)

+2. Choose the **Postgres** option by clicking **Link.**

+3. Go to your Supabase project settings and under configuration, click **Database.**

+4. Fill out the PostHog data warehouse link fields with your Supabase data.

+ 1. Under connection parameters, copy host, database name, port, and user (database name in Supabase is just `database` in PostHog)

+

+

+

+ 2. Enter the password you set for your database.

+ 3. Choose the schema you want to import. See the Supabase table editor for details on what each one has. `public` is the default, but we also use `auth` and `extensions` later in this tutorial.

+5. Once you add the details, click **Next**.

+

+

+

+6. Choose set up the sync methods, choose the tables you want to include, and click **Import** to start the sync.

+

+Once it completes, you can then query the data in PostHog.

+

+## Querying Supabase data in PostHog

+

+You can query your Supabase data using SQL insights. This enables you to combine it with your PostHog usage data.

+

+### Visualizing the count of objects over time

+

+You can use trends to visualize your data. For example, to visualize a count of objects over time:

+

+1. Create a [new insight](https://us.posthog.com/insights/new).

+2. As a series, search for `postgres` and select your table. For us, that is `postgres_newsletters`.

+3. Ensure your ID, distinct ID, and timestamp fields are correct and press **Select.**

+

+

+

+This creates a trend of the count of the objects in your table over time. You can then modify it using filters, visualization options, and breakdowns. For example, to break down by `user_id`, click **Add breakdown**, select the **Data warehouse properties** tab, and then choose `user_id`. To visualize this nicely, you can change the line chart to a total value bar chart.

+

+

+

+### Combined user overview

+

+Linking your Supabase `user` table (under the `auth` schema) enables you to get an overview of user data across both sources. This does require you to add a table prefix like `supabase_` if you already have a Postgres source linked.

+

+To create this overview, create a new SQL insight that:

+

+- Gets `email`, `last_sign_in_at`, `created_at` from Supabase's `user` table

+- A count of events from PostHog's `event` table

+- Join the tables using `email` and `distinct_id`

+

+Altogether, this looks like this:

+

+```sql

+with sb_users as (

+ select email, last_sign_in_at, created_at from supabase_postgres_users

+),

+big_events as (

+ select count(*) as event_count, distinct_id

+ from events

+ group by distinct_id

+)

+select email, last_sign_in_at, created_at, event_count

+from sb_users

+left join big_events on big_events.distinct_id = sb_users.email

+```

+

+We could also PostHog person properties or a Supabase table to these by joining more tables to this query.

+

+> **Tip:** You can also set up a join between PostHog's `persons` table and your Supabase `users` table. Go to the data warehouse tab, click the three dots next to the `persons` source, and click **Add join**. This enables you to use Supabase `users` data wherever you can use persons.

+>

+

+### Usage by paid users

+

+Similar to the last query, we can get a list of paid users from Supabase by filtering for users with a `paid` column (or equivalent) set to true. We can then use this list to analyze their PostHog usage.

+

+```sql

+with sb_users as (

+ select email, last_sign_in_at, created_at

+ from supabase_postgres_users

+ where paid = true

+),

+big_events as (

+ select count(*) as event_count, distinct_id

+ from events

+ group by distinct_id

+)

+select email, last_sign_in_at, created_at, event_count

+from sb_users

+left join big_events on big_events.distinct_id = sb_users.email

+```

+

+If your payment details were on another table, you could also join that table.

+

+### Querying observability stats

+

+Supabase also captures observability data we can query if you link the `pg_state_statements` table from the `extensions` schema.

+

+An example of a query you could get from this is p95 total execution time along with the median rows read:

+

+```sql

+SELECT

+ quantile(0.95)(total_exec_time) AS p95_exec_time,

+ median(rows) AS median_rows_read

+FROM sb_stats_postgres_pg_stat_statements

+```

+

+Another example is the most time-consuming queries:

+

+```sql

+SELECT

+ query,

+ total_exec_time,

+ calls,

+ rows

+FROM sb_stats_postgres_pg_stat_statements

+ORDER BY total_exec_time DESC

+LIMIT 10

+```

+

+One more for good luck, this gets queries with high variability:

+

+```sql

+SELECT

+ query,

+ stddev_exec_time,

+ mean_exec_time,

+ calls

+FROM sb_stats_postgres_pg_stat_statements

+WHERE calls > 10 -- Filter out queries with few calls

+ORDER BY stddev_exec_time / mean_exec_time DESC

+LIMIT 20

+```

+

+## Further reading

+

+- [How to set up Stripe reports](/tutorials/stripe-reports)

+- [The basics of SQL for analytics](/product-engineers/sql-for-analytics)

+- [How to set up Google Ads reports](/tutorials/google-ads-reports)