diff --git a/.github/workflows/ml.yml b/.github/workflows/ml.yml

index d6d4c03c..4f1585ea 100644

--- a/.github/workflows/ml.yml

+++ b/.github/workflows/ml.yml

@@ -26,4 +26,4 @@ jobs:

cd machine-learning-client

- name: Run tests

run: |

- pipenv run pytest

+ pipenv run pytest ./*.py

diff --git a/Pipfile b/Pipfile

index a9752772..cb0286be 100644

--- a/Pipfile

+++ b/Pipfile

@@ -18,6 +18,7 @@ numpy = "*"

pillow = "*"

jinja2 = "*"

coverage = "*"

+pytest-cov = "*"

[dev-packages]

diff --git a/Pipfile.lock b/Pipfile.lock

index 7269fff5..a3274a13 100644

--- a/Pipfile.lock

+++ b/Pipfile.lock

@@ -1,7 +1,7 @@

{

"_meta": {

"hash": {

- "sha256": "244b79d7965ddd508e80e68529e3ad9e79192b6cdc5fb56e0d710ee75cda1d94"

+ "sha256": "8c115d91118c7c5797ccd73b331d9c6e7b1b8024efb7b1478dd9a5092b274f46"

},

"pipfile-spec": 6,

"requires": {

@@ -197,6 +197,9 @@

"version": "==8.1.7"

},

"coverage": {

+ "extras": [

+ "toml"

+ ],

"hashes": [

"sha256:00838a35b882694afda09f85e469c96367daa3f3f2b097d846a7216993d37f4c",

"sha256:0513b9508b93da4e1716744ef6ebc507aff016ba115ffe8ecff744d1322a7b63",

@@ -1035,6 +1038,15 @@

"markers": "python_version >= '3.8'",

"version": "==8.1.1"

},

+ "pytest-cov": {

+ "hashes": [

+ "sha256:4f0764a1219df53214206bf1feea4633c3b558a2925c8b59f144f682861ce652",

+ "sha256:5837b58e9f6ebd335b0f8060eecce69b662415b16dc503883a02f45dfeb14857"

+ ],

+ "index": "pypi",

+ "markers": "python_version >= '3.8'",

+ "version": "==5.0.0"

+ },

"python-dateutil": {

"hashes": [

"sha256:37dd54208da7e1cd875388217d5e00ebd4179249f90fb72437e91a35459a0ad3",

diff --git a/machine-learning-client/Pipfile b/machine-learning-client/Pipfile

index 4939cb2a..9cf9bc01 100644

--- a/machine-learning-client/Pipfile

+++ b/machine-learning-client/Pipfile

@@ -13,7 +13,7 @@ black = "*"

pytest = "*"

coverage = "*"

pillow = "*"

-

+mongomock = "*"

[dev-packages]

diff --git a/machine-learning-client/Pipfile.lock b/machine-learning-client/Pipfile.lock

index aa729f3a..98aec783 100644

--- a/machine-learning-client/Pipfile.lock

+++ b/machine-learning-client/Pipfile.lock

@@ -1,7 +1,7 @@

{

"_meta": {

"hash": {

- "sha256": "7ffe4ab5cec4d71618177b60d89a6a400796a1e9a19d35e430e3b6edd59ccc47"

+ "sha256": "2f999c5ac293b09d5103e8f3e9a119f425cdd74921c853ab75bd6cb7182401d4"

},

"pipfile-spec": 6,

"requires": {

@@ -611,6 +611,14 @@

"markers": "python_version >= '3.9'",

"version": "==0.3.2"

},

+ "mongomock": {

+ "hashes": [

+ "sha256:08a24938a05c80c69b6b8b19a09888d38d8c6e7328547f94d46cadb7f47209f2",

+ "sha256:f06cd62afb8ae3ef63ba31349abd220a657ef0dd4f0243a29587c5213f931b7d"

+ ],

+ "index": "pypi",

+ "version": "==4.1.2"

+ },

"mtcnn": {

"hashes": [

"sha256:d0957274584be62cb83d4a089041f8ee3cf3b1893e45f01ed3356f94a381302b",

@@ -1075,6 +1083,12 @@

"markers": "python_full_version >= '3.7.0'",

"version": "==13.7.1"

},

+ "sentinels": {

+ "hashes": [

+ "sha256:7be0704d7fe1925e397e92d18669ace2f619c92b5d4eb21a89f31e026f9ff4b1"

+ ],

+ "version": "==1.0.0"

+ },

"setuptools": {

"hashes": [

"sha256:6c1fccdac05a97e598fb0ae3bbed5904ccb317337a51139dcd51453611bbb987",

diff --git a/machine-learning-client/client.py b/machine-learning-client/client.py

index 7a0b724d..8e6cef8c 100644

--- a/machine-learning-client/client.py

+++ b/machine-learning-client/client.py

@@ -6,7 +6,6 @@

Pymongo: connect to MongoDB

Deepface: to detect emotions in images

Numpy: numerical operations

-...

"""

import base64

from PIL import Image

@@ -16,10 +15,11 @@

import pymongo

import numpy as np

+

def get_emotion(image):

"""

Method for detecting emotions in an image containing humans,

- using the deepface library. Works with images containing multiple faces.

+ using the deepface library. Works with images containing multiple faces; returns the emotion scores of the first detected face.

"""

try:

bin_data = base64.b64decode(image)

@@ -29,8 +29,11 @@ def get_emotion(image):

emotions = obj[0]['emotion']

return emotions

except Exception as e:

- return f"ERROR: Couldn't detect a face for emotion. {e}"

+ return f"ERROR: {e}"

+

+

+def run_connection(option):

+ """Delegate to connect_db; a separate entry point lets tests patch connect_db."""

+ connect_db(option)

+

+

def connect_db(option):

"""

@@ -45,6 +48,8 @@ def connect_db(option):

pass

x = temp.find_one()

emotion_message = get_emotion(x["photo"])

+ if not emotion_message:

+ return "No emotions found"

if temp.find_one():

temp.update_one(

{

@@ -56,11 +61,8 @@ def connect_db(option):

}

},

)

- #print("REACHED HERE!")

time.sleep(1)

client.close()

-

if __name__ == "__main__":

- #im = cv2.imread('./test0.png')

- connect_db(True)

+ run_connection(True)
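`get_emotion` passes the stored `photo` value to `base64.b64decode`. The tests expect Pillow's "cannot identify image file" message on bad input, so the field presumably has to hold a base64-encoded image *file*. The sketch below shows one stdlib-only way to build such a payload for fixtures, such as a mongomock document. `make_png` and `encode_photo` are hypothetical helpers and are not part of this repository. Base64-encoding an array of decoded pixels, such as the array OpenCV's `cv2.imread` returns, does not produce a file that an image decoder can identify.

```python
import base64
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def _chunk(tag: bytes, data: bytes) -> bytes:
    """Encode one PNG chunk: big-endian length, tag, data, CRC-32 of tag + data."""
    crc = zlib.crc32(tag + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + tag + data + struct.pack(">I", crc)


def make_png(width: int, height: int, rgb: tuple = (255, 255, 255)) -> bytes:
    """Build a solid-colour, 8-bit RGB PNG file in memory (hypothetical test helper)."""
    # IHDR: width, height, bit depth 8, colour type 2 (RGB), default compression/filter, no interlace
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 2, 0, 0, 0)
    # Each scanline starts with filter-type byte 0 ("None"), followed by the raw RGB pixels
    scanline = b"\x00" + bytes(rgb) * width
    idat = zlib.compress(scanline * height)
    return (
        PNG_SIGNATURE
        + _chunk(b"IHDR", ihdr)
        + _chunk(b"IDAT", idat)
        + _chunk(b"IEND", b"")
    )


def encode_photo(file_bytes: bytes) -> str:
    """Base64-encode the bytes of an image *file* as a str for a 'photo' field (hypothetical)."""
    return base64.b64encode(file_bytes).decode("utf-8")


if __name__ == "__main__":
    png = make_png(4, 4)
    doc = {"_id": "", "photo": encode_photo(png)}
    # Decoding the field returns the original file bytes, PNG signature included
    assert base64.b64decode(doc["photo"]) == png
    print(base64.b64decode(doc["photo"])[:8] == PNG_SIGNATURE)
```

A blank image like this contains no face. It is therefore only useful for exercising the decode path or the error handling, not for checking real emotion scores.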

diff --git a/machine-learning-client/myenv/README.md b/machine-learning-client/myenv/README.md

deleted file mode 100644

index 79abe99c..00000000

--- a/machine-learning-client/myenv/README.md

+++ /dev/null

@@ -1,383 +0,0 @@

-# deepface

-

-

-

-[](https://pepy.tech/project/deepface)

-[](https://anaconda.org/conda-forge/deepface)

-[](https://github.com/serengil/deepface/stargazers)

-[](https://github.com/serengil/deepface/blob/master/LICENSE)

-[](https://github.com/serengil/deepface/actions/workflows/tests.yml)

-

-[](https://sefiks.com)

-[](https://www.youtube.com/@sefiks?sub_confirmation=1)

-[](https://twitter.com/intent/user?screen_name=serengil)

-[](https://www.patreon.com/serengil?repo=deepface)

-[](https://github.com/sponsors/serengil)

-

-[](https://doi.org/10.1109/ASYU50717.2020.9259802)

-[](https://doi.org/10.1109/ICEET53442.2021.9659697)

-

-

-

-

-

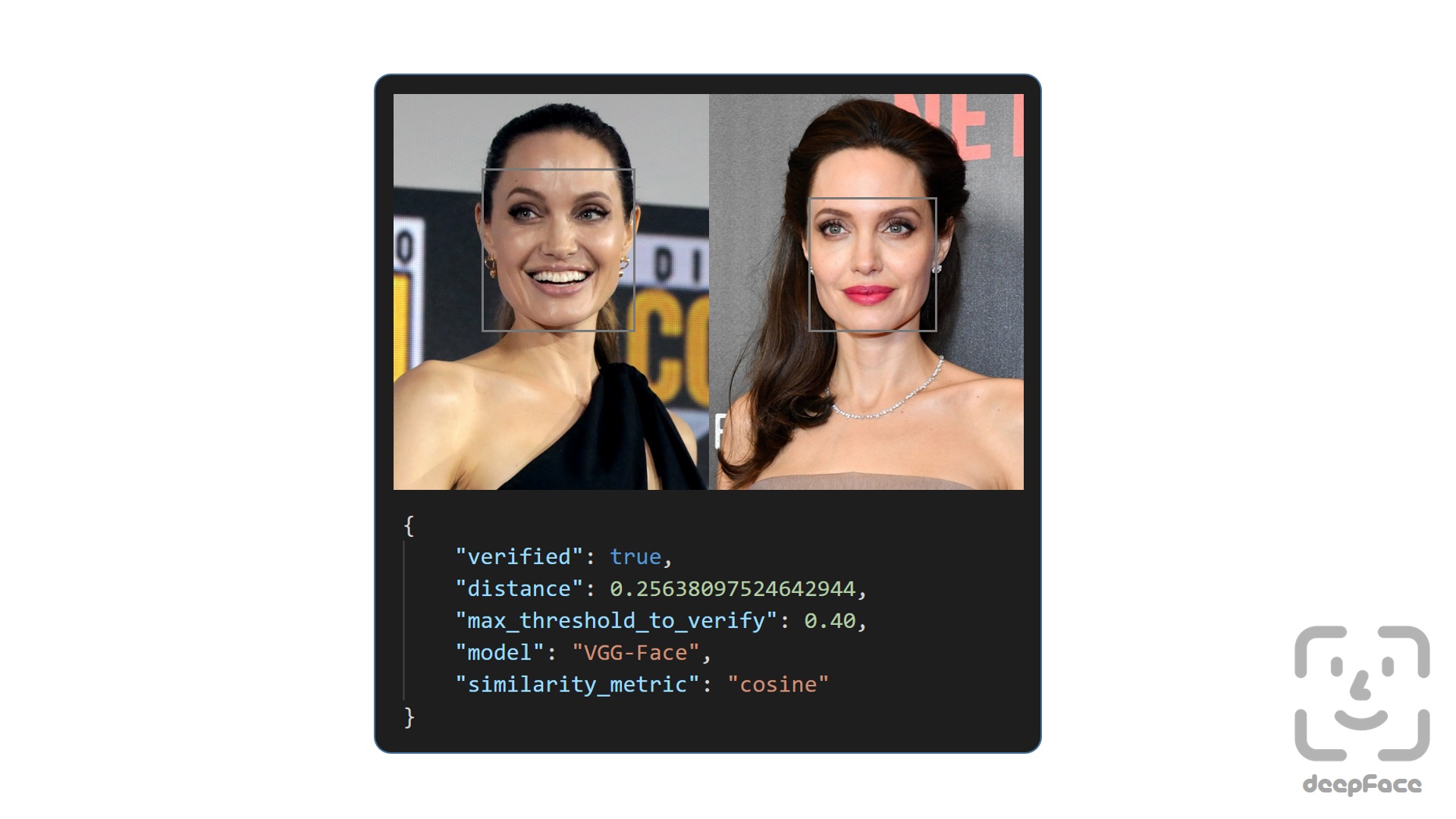

-Deepface is a lightweight [face recognition](https://sefiks.com/2018/08/06/deep-face-recognition-with-keras/) and facial attribute analysis ([age](https://sefiks.com/2019/02/13/apparent-age-and-gender-prediction-in-keras/), [gender](https://sefiks.com/2019/02/13/apparent-age-and-gender-prediction-in-keras/), [emotion](https://sefiks.com/2018/01/01/facial-expression-recognition-with-keras/) and [race](https://sefiks.com/2019/11/11/race-and-ethnicity-prediction-in-keras/)) framework for python. It is a hybrid face recognition framework wrapping **state-of-the-art** models: [`VGG-Face`](https://sefiks.com/2018/08/06/deep-face-recognition-with-keras/), [`Google FaceNet`](https://sefiks.com/2018/09/03/face-recognition-with-facenet-in-keras/), [`OpenFace`](https://sefiks.com/2019/07/21/face-recognition-with-openface-in-keras/), [`Facebook DeepFace`](https://sefiks.com/2020/02/17/face-recognition-with-facebook-deepface-in-keras/), [`DeepID`](https://sefiks.com/2020/06/16/face-recognition-with-deepid-in-keras/), [`ArcFace`](https://sefiks.com/2020/12/14/deep-face-recognition-with-arcface-in-keras-and-python/), [`Dlib`](https://sefiks.com/2020/07/11/face-recognition-with-dlib-in-python/), `SFace` and `GhostFaceNet`.

-

-Experiments show that human beings have 97.53% accuracy on facial recognition tasks whereas those models already reached and passed that accuracy level.

-

-## Installation [](https://pypi.org/project/deepface/) [](https://anaconda.org/conda-forge/deepface)

-

-The easiest way to install deepface is to download it from [`PyPI`](https://pypi.org/project/deepface/). It's going to install the library itself and its prerequisites as well.

-

-```shell

-$ pip install deepface

-```

-

-Secondly, DeepFace is also available at [`Conda`](https://anaconda.org/conda-forge/deepface). You can alternatively install the package via conda.

-

-```shell

-$ conda install -c conda-forge deepface

-```

-

-Thirdly, you can install deepface from its source code.

-

-```shell

-$ git clone https://github.com/serengil/deepface.git

-$ cd deepface

-$ pip install -e .

-```

-

-Then you will be able to import the library and use its functionalities.

-

-```python

-from deepface import DeepFace

-```

-

-**Facial Recognition** - [`Demo`](https://youtu.be/WnUVYQP4h44)

-

-A modern [**face recognition pipeline**](https://sefiks.com/2020/05/01/a-gentle-introduction-to-face-recognition-in-deep-learning/) consists of 5 common stages: [detect](https://sefiks.com/2020/08/25/deep-face-detection-with-opencv-in-python/), [align](https://sefiks.com/2020/02/23/face-alignment-for-face-recognition-in-python-within-opencv/), [normalize](https://sefiks.com/2020/11/20/facial-landmarks-for-face-recognition-with-dlib/), [represent](https://sefiks.com/2018/08/06/deep-face-recognition-with-keras/) and [verify](https://sefiks.com/2020/05/22/fine-tuning-the-threshold-in-face-recognition/). While Deepface handles all these common stages in the background, you don’t need to acquire in-depth knowledge about all the processes behind it. You can just call its verification, find or analysis function with a single line of code.

-

-**Face Verification** - [`Demo`](https://youtu.be/KRCvkNCOphE)

-

-This function verifies face pairs as same person or different persons. It expects exact image paths as inputs. Passing numpy or base64 encoded images is also welcome. Then, it is going to return a dictionary and you should check just its verified key.

-

-```python

-result = DeepFace.verify(img1_path = "img1.jpg", img2_path = "img2.jpg")

-```

-

-

-

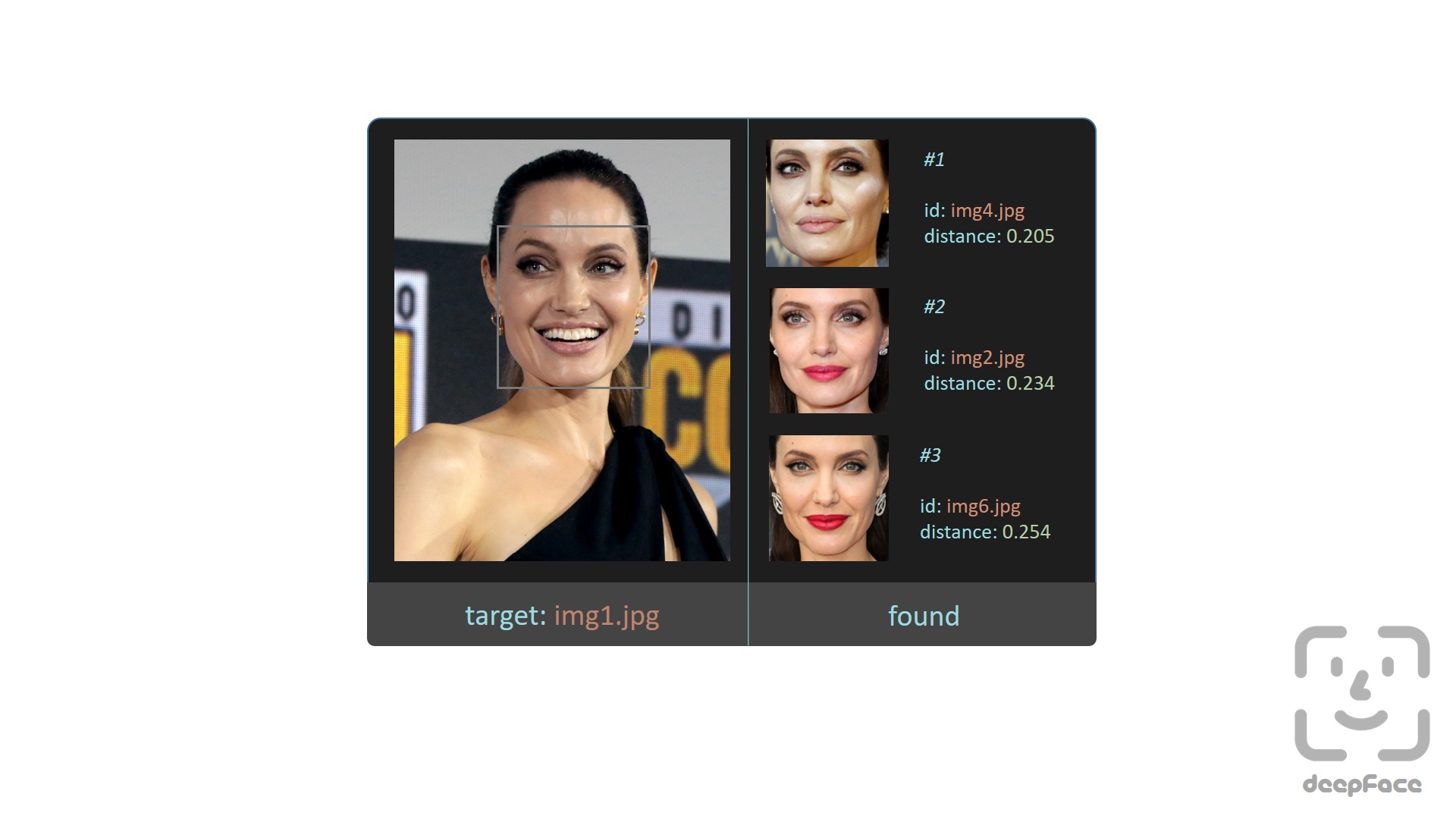

-**Face recognition** - [`Demo`](https://youtu.be/Hrjp-EStM_s)

-

-[Face recognition](https://sefiks.com/2020/05/25/large-scale-face-recognition-for-deep-learning/) requires applying face verification many times. Herein, deepface has an out-of-the-box find function to handle this action. It's going to look for the identity of input image in the database path and it will return list of pandas data frame as output. Meanwhile, facial embeddings of the facial database are stored in a pickle file to be searched faster in next time. Result is going to be the size of faces appearing in the source image. Besides, target images in the database can have many faces as well.

-

-

-```python

-dfs = DeepFace.find(img_path = "img1.jpg", db_path = "C:/workspace/my_db")

-```

-

-

-

-**Embeddings**

-

-Face recognition models basically represent facial images as multi-dimensional vectors. Sometimes, you need those embedding vectors directly. DeepFace comes with a dedicated representation function. Represent function returns a list of embeddings. Result is going to be the size of faces appearing in the image path.

-

-```python

-embedding_objs = DeepFace.represent(img_path = "img.jpg")

-```

-

-This function returns an array as embedding. The size of the embedding array would be different based on the model name. For instance, VGG-Face is the default model and it represents facial images as 4096 dimensional vectors.

-

-```python

-embedding = embedding_objs[0]["embedding"]

-assert isinstance(embedding, list)

-assert model_name = "VGG-Face" and len(embedding) == 4096

-```

-

-Here, embedding is also [plotted](https://sefiks.com/2020/05/01/a-gentle-introduction-to-face-recognition-in-deep-learning/) with 4096 slots horizontally. Each slot is corresponding to a dimension value in the embedding vector and dimension value is explained in the colorbar on the right. Similar to 2D barcodes, vertical dimension stores no information in the illustration.

-

-

-

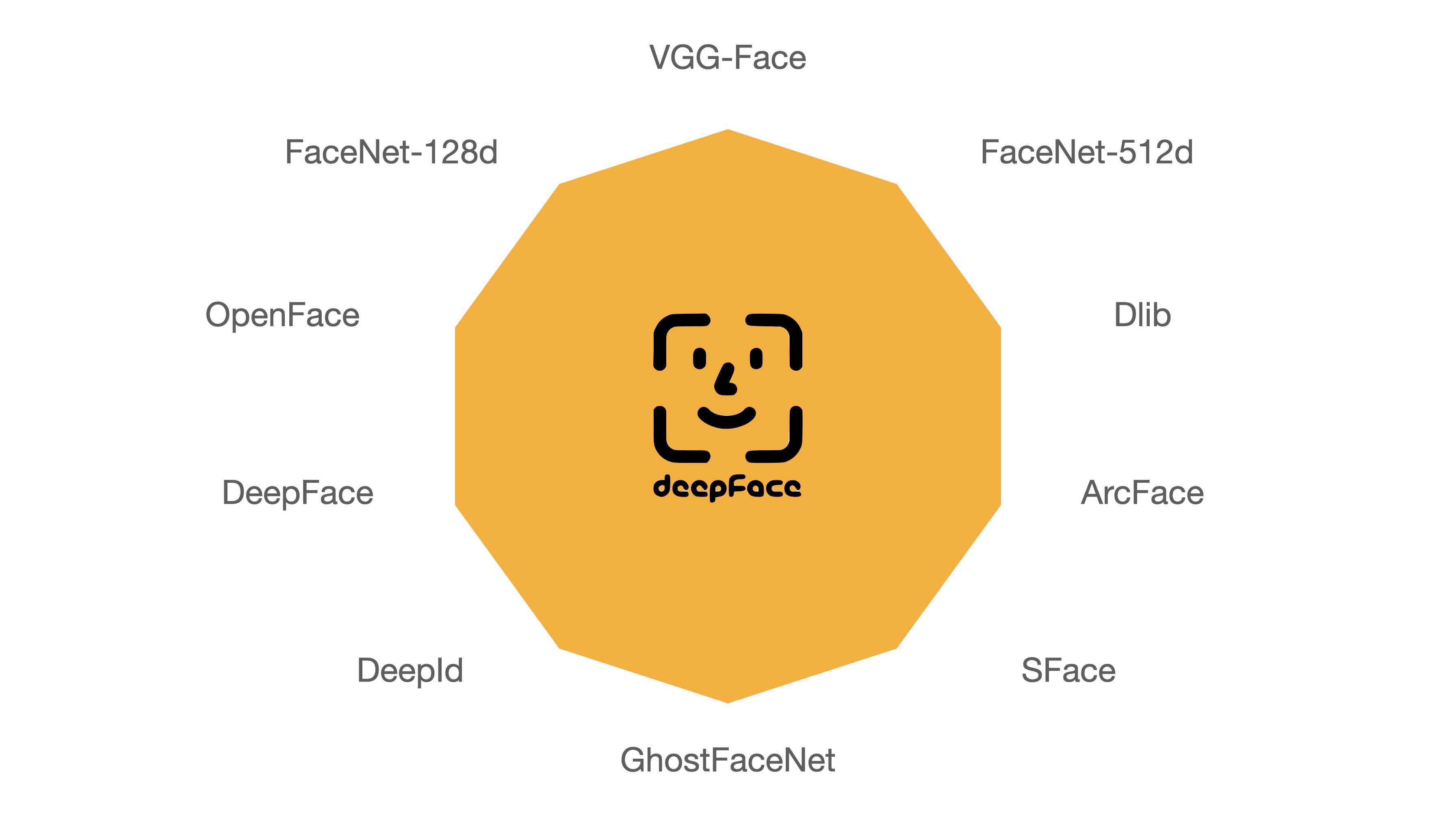

-**Face recognition models** - [`Demo`](https://youtu.be/i_MOwvhbLdI)

-

-Deepface is a **hybrid** face recognition package. It currently wraps many **state-of-the-art** face recognition models: [`VGG-Face`](https://sefiks.com/2018/08/06/deep-face-recognition-with-keras/) , [`Google FaceNet`](https://sefiks.com/2018/09/03/face-recognition-with-facenet-in-keras/), [`OpenFace`](https://sefiks.com/2019/07/21/face-recognition-with-openface-in-keras/), [`Facebook DeepFace`](https://sefiks.com/2020/02/17/face-recognition-with-facebook-deepface-in-keras/), [`DeepID`](https://sefiks.com/2020/06/16/face-recognition-with-deepid-in-keras/), [`ArcFace`](https://sefiks.com/2020/12/14/deep-face-recognition-with-arcface-in-keras-and-python/), [`Dlib`](https://sefiks.com/2020/07/11/face-recognition-with-dlib-in-python/), `SFace` and `GhostFaceNet`. The default configuration uses VGG-Face model.

-

-```python

-models = [

- "VGG-Face",

- "Facenet",

- "Facenet512",

- "OpenFace",

- "DeepFace",

- "DeepID",

- "ArcFace",

- "Dlib",

- "SFace",

- "GhostFaceNet",

-]

-

-#face verification

-result = DeepFace.verify(img1_path = "img1.jpg",

- img2_path = "img2.jpg",

- model_name = models[0]

-)

-

-#face recognition

-dfs = DeepFace.find(img_path = "img1.jpg",

- db_path = "C:/workspace/my_db",

- model_name = models[1]

-)

-

-#embeddings

-embedding_objs = DeepFace.represent(img_path = "img.jpg",

- model_name = models[2]

-)

-```

-

-

-

-FaceNet, VGG-Face, ArcFace and Dlib are [overperforming](https://youtu.be/i_MOwvhbLdI) ones based on experiments. You can find out the scores of those models below on [Labeled Faces in the Wild](https://sefiks.com/2020/08/27/labeled-faces-in-the-wild-for-face-recognition/) set declared by its creators.

-

-| Model | Declared LFW Score |

-| -------------- | ------------------ |

-| VGG-Face | 98.9% |

-| Facenet | 99.2% |

-| Facenet512 | 99.6% |

-| OpenFace | 92.9% |

-| DeepID | 97.4% |

-| Dlib | 99.3 % |

-| SFace | 99.5% |

-| ArcFace | 99.5% |

-| GhostFaceNet | 99.7% |

-| *Human-beings* | *97.5%* |

-

-Conducting experiments with those models within DeepFace may reveal disparities compared to the original studies, owing to the adoption of distinct detection or normalization techniques. Furthermore, some models have been released solely with their backbones, lacking pre-trained weights. Thus, we are utilizing their re-implementations instead of the original pre-trained weights.

-

-**Similarity**

-

-Face recognition models are regular [convolutional neural networks](https://sefiks.com/2018/03/23/convolutional-autoencoder-clustering-images-with-neural-networks/) and they are responsible to represent faces as vectors. We expect that a face pair of same person should be [more similar](https://sefiks.com/2020/05/22/fine-tuning-the-threshold-in-face-recognition/) than a face pair of different persons.

-

-Similarity could be calculated by different metrics such as [Cosine Similarity](https://sefiks.com/2018/08/13/cosine-similarity-in-machine-learning/), Euclidean Distance and L2 form. The default configuration uses cosine similarity.

-

-```python

-metrics = ["cosine", "euclidean", "euclidean_l2"]

-

-#face verification

-result = DeepFace.verify(img1_path = "img1.jpg",

- img2_path = "img2.jpg",

- distance_metric = metrics[1]

-)

-

-#face recognition

-dfs = DeepFace.find(img_path = "img1.jpg",

- db_path = "C:/workspace/my_db",

- distance_metric = metrics[2]

-)

-```

-

-Euclidean L2 form [seems](https://youtu.be/i_MOwvhbLdI) to be more stable than cosine and regular Euclidean distance based on experiments.

-

-**Facial Attribute Analysis** - [`Demo`](https://youtu.be/GT2UeN85BdA)

-

-Deepface also comes with a strong facial attribute analysis module including [`age`](https://sefiks.com/2019/02/13/apparent-age-and-gender-prediction-in-keras/), [`gender`](https://sefiks.com/2019/02/13/apparent-age-and-gender-prediction-in-keras/), [`facial expression`](https://sefiks.com/2018/01/01/facial-expression-recognition-with-keras/) (including angry, fear, neutral, sad, disgust, happy and surprise) and [`race`](https://sefiks.com/2019/11/11/race-and-ethnicity-prediction-in-keras/) (including asian, white, middle eastern, indian, latino and black) predictions. Result is going to be the size of faces appearing in the source image.

-

-```python

-objs = DeepFace.analyze(img_path = "img4.jpg",

- actions = ['age', 'gender', 'race', 'emotion']

-)

-```

-

-

-

-Age model got ± 4.65 MAE; gender model got 97.44% accuracy, 96.29% precision and 95.05% recall as mentioned in its [tutorial](https://sefiks.com/2019/02/13/apparent-age-and-gender-prediction-in-keras/).

-

-

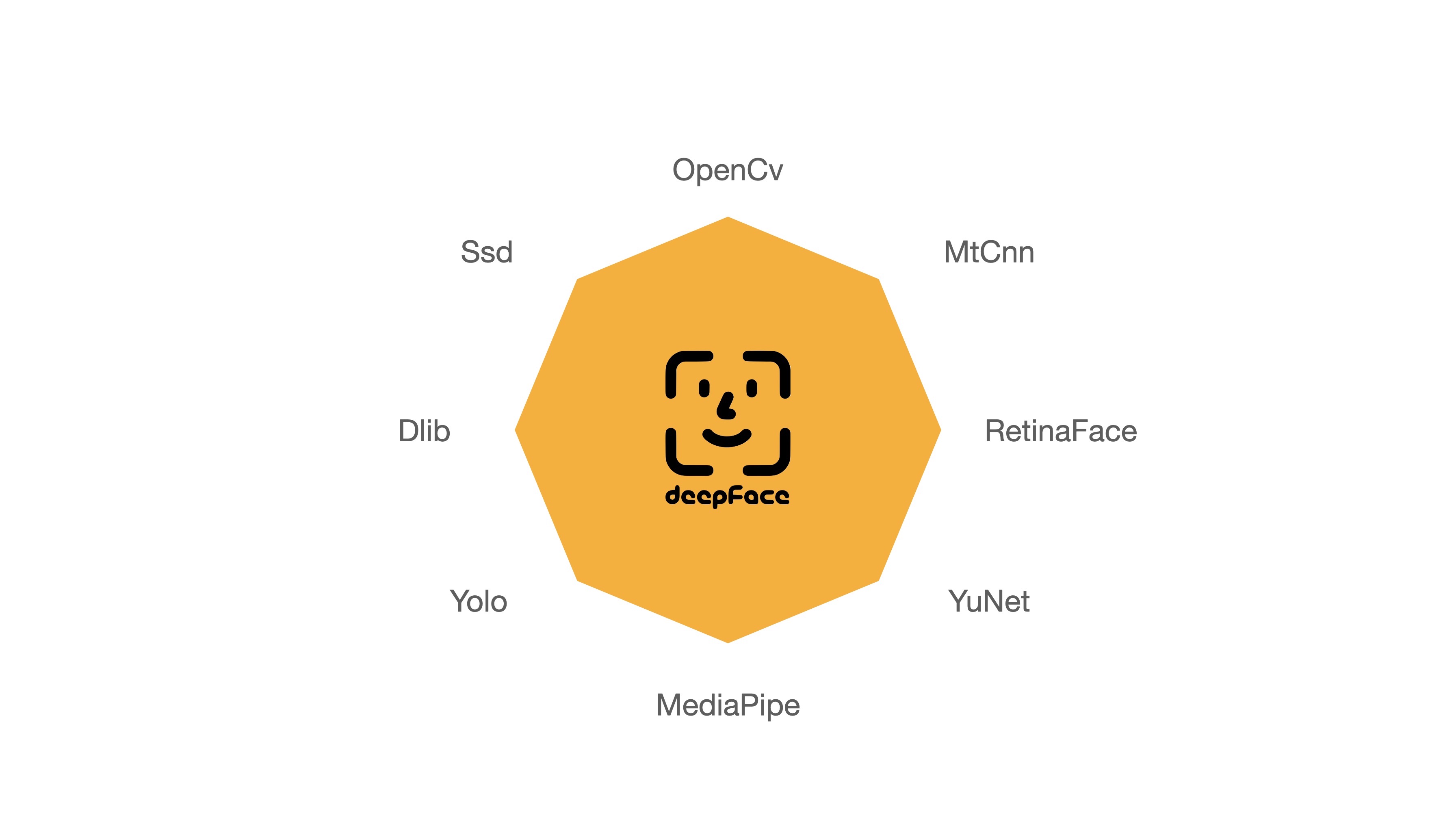

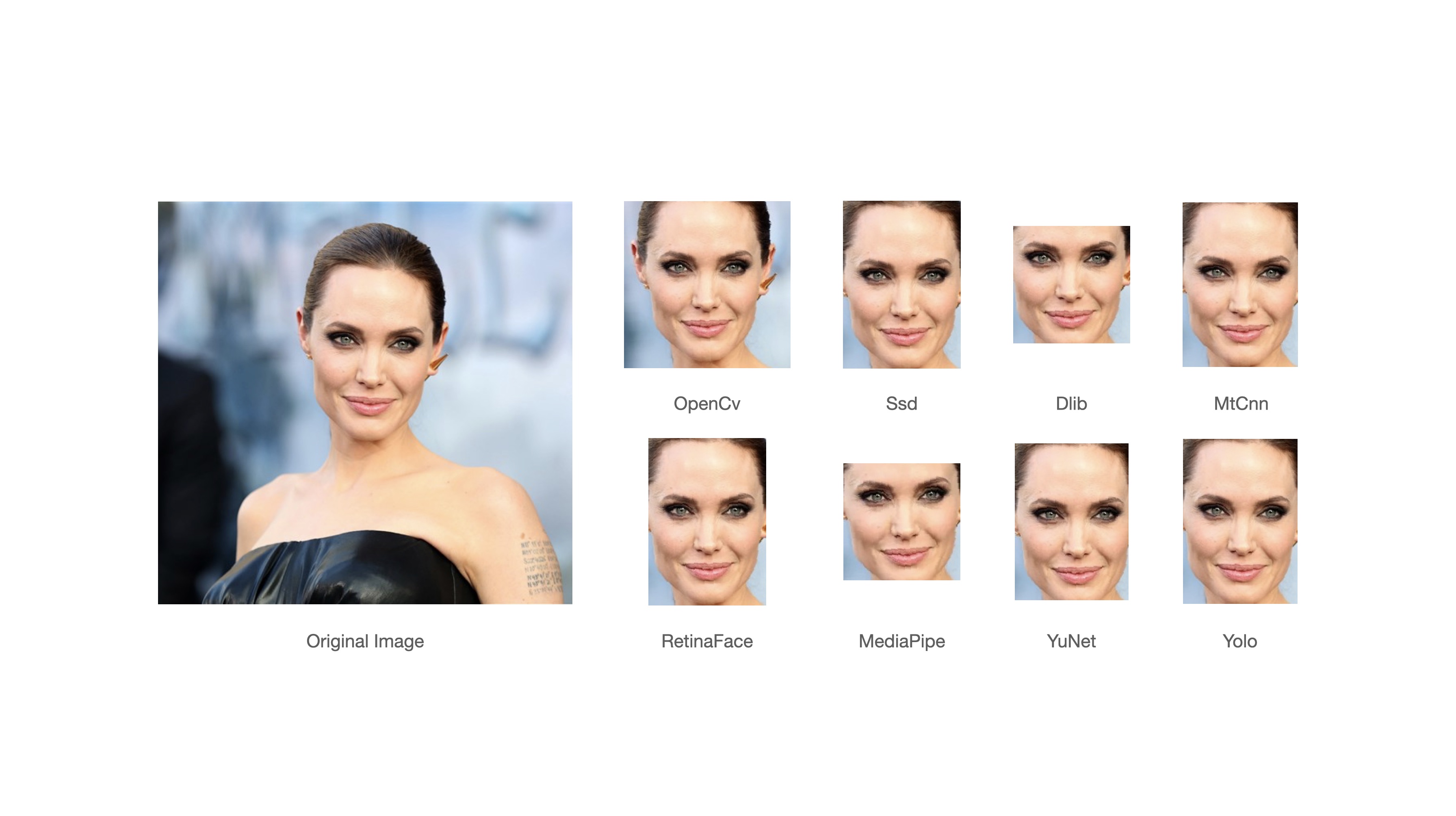

-**Face Detectors** - [`Demo`](https://youtu.be/GZ2p2hj2H5k)

-

-Face detection and alignment are important early stages of a modern face recognition pipeline. Experiments show that just alignment increases the face recognition accuracy almost 1%. [`OpenCV`](https://sefiks.com/2020/02/23/face-alignment-for-face-recognition-in-python-within-opencv/), [`SSD`](https://sefiks.com/2020/08/25/deep-face-detection-with-opencv-in-python/), [`Dlib`](https://sefiks.com/2020/07/11/face-recognition-with-dlib-in-python/), [`MTCNN`](https://sefiks.com/2020/09/09/deep-face-detection-with-mtcnn-in-python/), [`Faster MTCNN`](https://github.com/timesler/facenet-pytorch), [`RetinaFace`](https://sefiks.com/2021/04/27/deep-face-detection-with-retinaface-in-python/), [`MediaPipe`](https://sefiks.com/2022/01/14/deep-face-detection-with-mediapipe/), [`YOLOv8 Face`](https://github.com/derronqi/yolov8-face) and [`YuNet`](https://github.com/ShiqiYu/libfacedetection) detectors are wrapped in deepface.

-

-

-

-All deepface functions accept an optional detector backend input argument. You can switch among those detectors with this argument. OpenCV is the default detector.

-

-```python

-backends = [

- 'opencv',

- 'ssd',

- 'dlib',

- 'mtcnn',

- 'retinaface',

- 'mediapipe',

- 'yolov8',

- 'yunet',

- 'fastmtcnn',

-]

-

-#face verification

-obj = DeepFace.verify(img1_path = "img1.jpg",

- img2_path = "img2.jpg",

- detector_backend = backends[0]

-)

-

-#face recognition

-dfs = DeepFace.find(img_path = "img.jpg",

- db_path = "my_db",

- detector_backend = backends[1]

-)

-

-#embeddings

-embedding_objs = DeepFace.represent(img_path = "img.jpg",

- detector_backend = backends[2]

-)

-

-#facial analysis

-demographies = DeepFace.analyze(img_path = "img4.jpg",

- detector_backend = backends[3]

-)

-

-#face detection and alignment

-face_objs = DeepFace.extract_faces(img_path = "img.jpg",

- target_size = (224, 224),

- detector_backend = backends[4]

-)

-```

-

-Face recognition models are actually CNN models and they expect standard sized inputs. So, resizing is required before representation. To avoid deformation, deepface adds black padding pixels according to the target size argument after detection and alignment.

-

-

-

-[RetinaFace](https://sefiks.com/2021/04/27/deep-face-detection-with-retinaface-in-python/) and [MTCNN](https://sefiks.com/2020/09/09/deep-face-detection-with-mtcnn-in-python/) seem to overperform in detection and alignment stages but they are much slower. If the speed of your pipeline is more important, then you should use opencv or ssd. On the other hand, if you consider the accuracy, then you should use retinaface or mtcnn.

-

-The performance of RetinaFace is very satisfactory even in the crowd as seen in the following illustration. Besides, it comes with an incredible facial landmark detection performance. Highlighted red points show some facial landmarks such as eyes, nose and mouth. That's why, alignment score of RetinaFace is high as well.

-

-

-The Yellow Angels - Fenerbahce Women's Volleyball Team

-

-

-You can find out more about RetinaFace on this [repo](https://github.com/serengil/retinaface).

-

-**Real Time Analysis** - [`Demo`](https://youtu.be/-c9sSJcx6wI)

-

-You can run deepface for real time videos as well. Stream function will access your webcam and apply both face recognition and facial attribute analysis. The function starts to analyze a frame if it can focus a face sequentially 5 frames. Then, it shows results 5 seconds.

-

-```python

-DeepFace.stream(db_path = "C:/User/Sefik/Desktop/database")

-```

-

-

-

-Even though face recognition is based on one-shot learning, you can use multiple face pictures of a person as well. You should rearrange your directory structure as illustrated below.

-

-```bash

-user

-├── database

-│ ├── Alice

-│ │ ├── Alice1.jpg

-│ │ ├── Alice2.jpg

-│ ├── Bob

-│ │ ├── Bob.jpg

-```

-

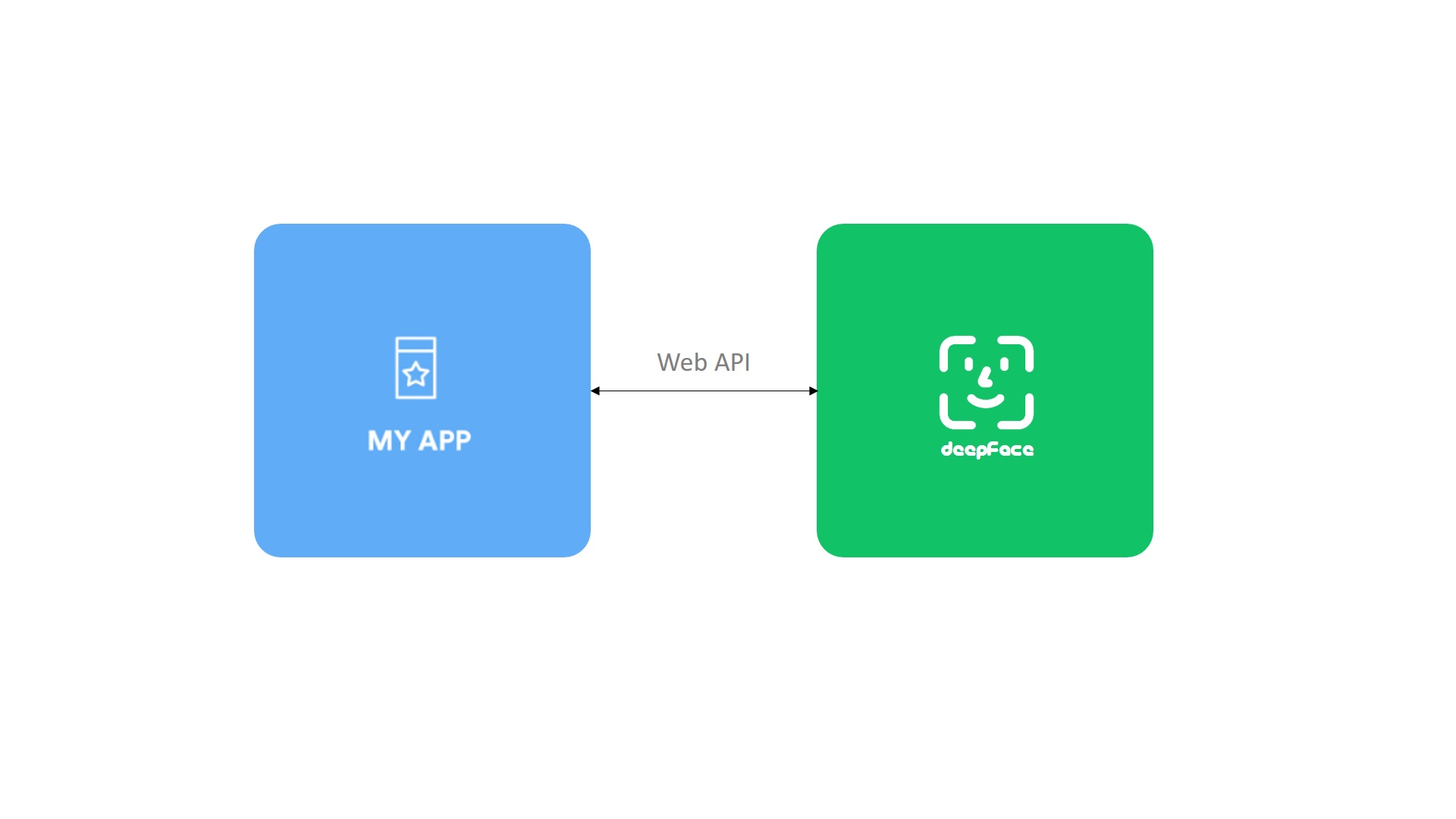

-**API** - [`Demo`](https://youtu.be/HeKCQ6U9XmI)

-

-DeepFace serves an API as well - see [`api folder`](https://github.com/serengil/deepface/tree/master/deepface/api/src) for more details. You can clone deepface source code and run the api with the following command. It will use gunicorn server to get a rest service up. In this way, you can call deepface from an external system such as mobile app or web.

-

-```shell

-cd scripts

-./service.sh

-```

-

-

-

-Face recognition, facial attribute analysis and vector representation functions are covered in the API. You are expected to call these functions as http post methods. Default service endpoints will be `http://localhost:5000/verify` for face recognition, `http://localhost:5000/analyze` for facial attribute analysis, and `http://localhost:5000/represent` for vector representation. You can pass input images as exact image paths on your environment, base64 encoded strings or images on web. [Here](https://github.com/serengil/deepface/tree/master/deepface/api/postman), you can find a postman project to find out how these methods should be called.

-

-**Dockerized Service**

-

-You can deploy the deepface api on a kubernetes cluster with docker. The following [shell script](https://github.com/serengil/deepface/blob/master/scripts/dockerize.sh) will serve deepface on `localhost:5000`. You need to re-configure the [Dockerfile](https://github.com/serengil/deepface/blob/master/Dockerfile) if you want to change the port. Then, even if you do not have a development environment, you will be able to consume deepface services such as verify and analyze. You can also access the inside of the docker image to run deepface related commands. Please follow the instructions in the [shell script](https://github.com/serengil/deepface/blob/master/scripts/dockerize.sh).

-

-```shell

-cd scripts

-./dockerize.sh

-```

-

-

-

-**Command Line Interface** - [`Demo`](https://youtu.be/PKKTAr3ts2s)

-

-DeepFace comes with a command line interface as well. You are able to access its functions in command line as shown below. The command deepface expects the function name as 1st argument and function arguments thereafter.

-

-```shell

-#face verification

-$ deepface verify -img1_path tests/dataset/img1.jpg -img2_path tests/dataset/img2.jpg

-

-#facial analysis

-$ deepface analyze -img_path tests/dataset/img1.jpg

-```

-

-You can also run these commands if you are running deepface with docker. Please follow the instructions in the [shell script](https://github.com/serengil/deepface/blob/master/scripts/dockerize.sh#L17).

-

-## FAQ and Troubleshooting

-

-If you believe you have identified a bug or encountered a limitation in DeepFace that is not covered in the [existing issues](https://github.com/serengil/deepface/issues) or [closed issues](https://github.com/serengil/deepface/issues?q=is%3Aissue+is%3Aclosed), kindly open a new issue. Ensure that your submission includes clear and detailed reproduction steps, such as your Python version, your DeepFace version (provided by `DeepFace.__version__`), versions of dependent packages (provided by pip freeze), specifics of any exception messages, details about how you are calling DeepFace, and the input image(s) you are using.

-

-Additionally, it is possible to encounter issues due to recently released dependencies, primarily Python itself or TensorFlow. It is recommended to synchronize your dependencies with the versions [specified in my environment](https://github.com/serengil/deepface/blob/master/requirements_local) and [same python version](https://github.com/serengil/deepface/blob/master/Dockerfile#L2) not to have potential compatibility issues.

-

-## Contribution

-

-Pull requests are more than welcome! If you are planning to contribute a large patch, please create an issue first to get any upfront questions or design decisions out of the way first.

-

-Before creating a PR, you should run the unit tests and linting locally by running `make test && make lint` command. Once a PR sent, GitHub test workflow will be run automatically and unit test and linting jobs will be available in [GitHub actions](https://github.com/serengil/deepface/actions) before approval.

-

-## Support

-

-There are many ways to support a project - starring⭐️ the GitHub repo is just one 🙏

-

-You can also support this work on [Patreon](https://www.patreon.com/serengil?repo=deepface) or [GitHub Sponsors](https://github.com/sponsors/serengil).

-

-

-

-## Citation

-

-Please cite deepface in your publications if it helps your research - see [`CITATIONS`](https://github.com/serengil/deepface/blob/master/CITATION.md) for more details. Here are its BibTex entries:

-

-If you use deepface in your research for facial recogntion purposes, please cite this publication.

-

-```BibTeX

-@inproceedings{serengil2020lightface,

- title = {LightFace: A Hybrid Deep Face Recognition Framework},

- author = {Serengil, Sefik Ilkin and Ozpinar, Alper},

- booktitle = {2020 Innovations in Intelligent Systems and Applications Conference (ASYU)},

- pages = {23-27},

- year = {2020},

- doi = {10.1109/ASYU50717.2020.9259802},

- url = {https://doi.org/10.1109/ASYU50717.2020.9259802},

- organization = {IEEE}

-}

-```

-

-If you use deepface in your research for facial attribute analysis purposes such as age, gender, emotion or ethnicity prediction or face detection purposes, please cite this publication.

-

-```BibTeX

-@inproceedings{serengil2021lightface,

- title = {HyperExtended LightFace: A Facial Attribute Analysis Framework},

- author = {Serengil, Sefik Ilkin and Ozpinar, Alper},

- booktitle = {2021 International Conference on Engineering and Emerging Technologies (ICEET)},

- pages = {1-4},

- year = {2021},

- doi = {10.1109/ICEET53442.2021.9659697},

- url = {https://doi.org/10.1109/ICEET53442.2021.9659697},

- organization = {IEEE}

-}

-```

-

-Also, if you use deepface in your GitHub projects, please add `deepface` in the `requirements.txt`.

-

-## Licence

-

-DeepFace is licensed under the MIT License - see [`LICENSE`](https://github.com/serengil/deepface/blob/master/LICENSE) for more details.

-

-DeepFace wraps some external face recognition models: [VGG-Face](http://www.robots.ox.ac.uk/~vgg/software/vgg_face/), [Facenet](https://github.com/davidsandberg/facenet/blob/master/LICENSE.md), [OpenFace](https://github.com/iwantooxxoox/Keras-OpenFace/blob/master/LICENSE), [DeepFace](https://github.com/swghosh/DeepFace), [DeepID](https://github.com/Ruoyiran/DeepID/blob/master/LICENSE.md), [ArcFace](https://github.com/leondgarse/Keras_insightface/blob/master/LICENSE), [Dlib](https://github.com/davisking/dlib/blob/master/dlib/LICENSE.txt), [SFace](https://github.com/opencv/opencv_zoo/blob/master/models/face_recognition_sface/LICENSE) and [GhostFaceNet](https://github.com/HamadYA/GhostFaceNets/blob/main/LICENSE). Besides, age, gender and race / ethnicity models were trained on the backbone of VGG-Face with transfer learning. Licence types will be inherited if you are going to use those models. Please check the license types of those models for production purposes.

-

-DeepFace [logo](https://thenounproject.com/term/face-recognition/2965879/) is created by [Adrien Coquet](https://thenounproject.com/coquet_adrien/) and it is licensed under [Creative Commons: By Attribution 3.0 License](https://creativecommons.org/licenses/by/3.0/).

diff --git a/machine-learning-client/myenv/bin/deepface b/machine-learning-client/myenv/bin/deepface

deleted file mode 100755

index 02f40296..00000000

--- a/machine-learning-client/myenv/bin/deepface

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from deepface.DeepFace import cli

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(cli())

diff --git a/machine-learning-client/myenv/bin/flask b/machine-learning-client/myenv/bin/flask

deleted file mode 100755

index bdc56aac..00000000

--- a/machine-learning-client/myenv/bin/flask

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from flask.cli import main

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(main())

diff --git a/machine-learning-client/myenv/bin/gdown b/machine-learning-client/myenv/bin/gdown

deleted file mode 100755

index 7ab694fb..00000000

--- a/machine-learning-client/myenv/bin/gdown

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from gdown.__main__ import main

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(main())

diff --git a/machine-learning-client/myenv/bin/gunicorn b/machine-learning-client/myenv/bin/gunicorn

deleted file mode 100755

index c6363878..00000000

--- a/machine-learning-client/myenv/bin/gunicorn

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from gunicorn.app.wsgiapp import run

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(run())

diff --git a/machine-learning-client/myenv/package_info.json b/machine-learning-client/myenv/package_info.json

deleted file mode 100644

index 9eac2a23..00000000

--- a/machine-learning-client/myenv/package_info.json

+++ /dev/null

@@ -1,3 +0,0 @@

-{

- "version": "0.0.89"

-}

\ No newline at end of file

diff --git a/machine-learning-client/myenv/requirements.txt b/machine-learning-client/myenv/requirements.txt

deleted file mode 100644

index cf5ce5f9..00000000

--- a/machine-learning-client/myenv/requirements.txt

+++ /dev/null

@@ -1,13 +0,0 @@

-numpy>=1.14.0

-pandas>=0.23.4

-gdown>=3.10.1

-tqdm>=4.30.0

-Pillow>=5.2.0

-opencv-python>=4.5.5.64

-tensorflow>=1.9.0

-keras>=2.2.0

-Flask>=1.1.2

-mtcnn>=0.1.0

-retina-face>=0.0.1

-fire>=0.4.0

-gunicorn>=20.1.0

diff --git a/machine-learning-client/requirements.txt b/machine-learning-client/requirements.txt

deleted file mode 100644

index 3b481149..00000000

--- a/machine-learning-client/requirements.txt

+++ /dev/null

@@ -1,87 +0,0 @@

-absl-py==2.1.0

-astroid==3.1.0

-astunparse==1.6.3

-black==24.3.0

-certifi==2024.2.2

-charset-normalizer==3.3.2

-click==8.1.7

-contourpy==1.2.1

-cycler==0.12.1

-decorator==4.4.2

-dill==0.3.8

-dnspython==2.6.1

-exceptiongroup==1.2.0

-facenet-pytorch==2.5.3

-fer==22.5.1

-ffmpeg==1.4

-filelock==3.13.4

-flatbuffers==24.3.25

-fonttools==4.51.0

-fsspec==2024.3.1

-gast==0.5.4

-google-pasta==0.2.0

-grpcio==1.62.1

-h5py==3.11.0

-idna==3.6

-imageio==2.34.0

-imageio-ffmpeg==0.4.9

-importlib_metadata==7.1.0

-importlib_resources==6.4.0

-iniconfig==2.0.0

-isort==5.13.2

-Jinja2==3.1.3

-keras==3.2.0

-kiwisolver==1.4.5

-libclang==18.1.1

-Markdown==3.6

-markdown-it-py==3.0.0

-MarkupSafe==2.1.5

-matplotlib==3.8.4

-mccabe==0.7.0

-mdurl==0.1.2

-ml-dtypes==0.3.2

-moviepy==1.0.3

-mpmath==1.3.0

-mypy-extensions==1.0.0

-namex==0.0.7

-networkx==3.2.1

-numpy==1.26.4

-opencv-contrib-python==4.9.0.80

-opencv-python==4.9.0.80

-opt-einsum==3.3.0

-optree==0.11.0

-packaging==24.0

-pandas==2.2.2

-pathspec==0.12.1

-pillow==10.3.0

-platformdirs==4.2.0

-pluggy==1.4.0

-proglog==0.1.10

-protobuf==4.25.3

-Pygments==2.17.2

-pylint==3.1.0

-pymongo==4.6.3

-pyparsing==3.1.2

-pytest==8.1.1

-python-dateutil==2.9.0.post0

-pytz==2024.1

-requests==2.31.0

-rich==13.7.1

-six==1.16.0

-sympy==1.12

-tensorboard==2.16.2

-tensorboard-data-server==0.7.2

-tensorflow==2.16.1

-tensorflow-io-gcs-filesystem==0.36.0

-termcolor==2.4.0

-tomli==2.0.1

-tomlkit==0.12.4

-torch==2.2.2

-torchvision==0.17.2

-tqdm==4.66.2

-typing_extensions==4.11.0

-tzdata==2024.1

-urllib3==2.2.1

-Werkzeug==3.0.2

-wrapt==1.16.0

-zipp==3.18.1

diff --git a/machine-learning-client/test_client.py b/machine-learning-client/test_client.py

index 74b0c1d0..16a121a9 100644

--- a/machine-learning-client/test_client.py

+++ b/machine-learning-client/test_client.py

@@ -1,12 +1,56 @@

-from unittest.mock import patch, MagicMock

-import pytest, os

-from client import connect_db, get_emotion

+from unittest.mock import patch

+import mongomock

+import base64

+import io, cv2, os, pytest

+from client import connect_db, get_emotion, run_connection, DeepFace

from PIL import Image

+from pymongo import MongoClient

+import time

-os.environ["IMAGEIO_FFMPEG_EXE"] = "/usr/bin/ffmpeg"

def test_bad_connection():

"""Testing loop exit for connection method"""

# pylint: disable=unused-variable

with patch("pymongo.MongoClient"):

connect_db(False)

+

+def test_connect_option_true():

+ """When the flag for running is true"""

+ with patch("client.connect_db") as mock_connection:

+ run_connection(True)

+ mock_connection.assert_called_once_with(True)

+

+

+def test_connect_option_false():

+ """run_connection method:false"""

+ with patch("client.connect_db") as mock_connection:

+ run_connection(False)

+ mock_connection.assert_called_once_with(False)

+

+

+def test_get_emotion_invalid_input():

+ with open("test1.png", "rb") as file:

+ image = file.read()

+ assert get_emotion("test")[:33] == "ERROR: cannot identify image file"

+ assert get_emotion(image)[:33] == "ERROR: cannot identify image file"

+

+def test_get_emotion_success():

+ """Testing connection"""

+ # pylint: disable=unused-variable

+ with patch("pymongo.MongoClient") as mock_client:

+ with patch("pymongo.collection.Collection") as mock_collection:

+ mock_collection.find_one.return_value = {

+ "_id": "",

+ "photo": base64.b64encode(cv2.imread("test1.png")).decode("utf-8"),

+ }

+ connect_db(False)

+

+def test_get_emotion_with_image():

+ """Testing get_emotion method"""

+ with open("./test0.png", "rb") as file:

+ image = file.read()

+ print(get_emotion(base64.b64encode(image).decode("utf-8")))

+

+

+

-

-

-## Citation

-

-Please cite deepface in your publications if it helps your research - see [`CITATIONS`](https://github.com/serengil/deepface/blob/master/CITATION.md) for more details. Here are its BibTex entries:

-

-If you use deepface in your research for facial recogntion purposes, please cite this publication.

-

-```BibTeX

-@inproceedings{serengil2020lightface,

- title = {LightFace: A Hybrid Deep Face Recognition Framework},

- author = {Serengil, Sefik Ilkin and Ozpinar, Alper},

- booktitle = {2020 Innovations in Intelligent Systems and Applications Conference (ASYU)},

- pages = {23-27},

- year = {2020},

- doi = {10.1109/ASYU50717.2020.9259802},

- url = {https://doi.org/10.1109/ASYU50717.2020.9259802},

- organization = {IEEE}

-}

-```

-

-If you use deepface in your research for facial attribute analysis purposes such as age, gender, emotion or ethnicity prediction or face detection purposes, please cite this publication.

-

-```BibTeX

-@inproceedings{serengil2021lightface,

- title = {HyperExtended LightFace: A Facial Attribute Analysis Framework},

- author = {Serengil, Sefik Ilkin and Ozpinar, Alper},

- booktitle = {2021 International Conference on Engineering and Emerging Technologies (ICEET)},

- pages = {1-4},

- year = {2021},

- doi = {10.1109/ICEET53442.2021.9659697},

- url = {https://doi.org/10.1109/ICEET53442.2021.9659697},

- organization = {IEEE}

-}

-```

-

-Also, if you use deepface in your GitHub projects, please add `deepface` in the `requirements.txt`.

-

-## Licence

-

-DeepFace is licensed under the MIT License - see [`LICENSE`](https://github.com/serengil/deepface/blob/master/LICENSE) for more details.

-

-DeepFace wraps some external face recognition models: [VGG-Face](http://www.robots.ox.ac.uk/~vgg/software/vgg_face/), [Facenet](https://github.com/davidsandberg/facenet/blob/master/LICENSE.md), [OpenFace](https://github.com/iwantooxxoox/Keras-OpenFace/blob/master/LICENSE), [DeepFace](https://github.com/swghosh/DeepFace), [DeepID](https://github.com/Ruoyiran/DeepID/blob/master/LICENSE.md), [ArcFace](https://github.com/leondgarse/Keras_insightface/blob/master/LICENSE), [Dlib](https://github.com/davisking/dlib/blob/master/dlib/LICENSE.txt), [SFace](https://github.com/opencv/opencv_zoo/blob/master/models/face_recognition_sface/LICENSE) and [GhostFaceNet](https://github.com/HamadYA/GhostFaceNets/blob/main/LICENSE). Besides, age, gender and race / ethnicity models were trained on the backbone of VGG-Face with transfer learning. Licence types will be inherited if you are going to use those models. Please check the license types of those models for production purposes.

-

-DeepFace [logo](https://thenounproject.com/term/face-recognition/2965879/) is created by [Adrien Coquet](https://thenounproject.com/coquet_adrien/) and it is licensed under [Creative Commons: By Attribution 3.0 License](https://creativecommons.org/licenses/by/3.0/).

diff --git a/machine-learning-client/myenv/bin/deepface b/machine-learning-client/myenv/bin/deepface

deleted file mode 100755

index 02f40296..00000000

--- a/machine-learning-client/myenv/bin/deepface

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from deepface.DeepFace import cli

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(cli())

diff --git a/machine-learning-client/myenv/bin/flask b/machine-learning-client/myenv/bin/flask

deleted file mode 100755

index bdc56aac..00000000

--- a/machine-learning-client/myenv/bin/flask

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from flask.cli import main

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(main())

diff --git a/machine-learning-client/myenv/bin/gdown b/machine-learning-client/myenv/bin/gdown

deleted file mode 100755

index 7ab694fb..00000000

--- a/machine-learning-client/myenv/bin/gdown

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from gdown.__main__ import main

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(main())

diff --git a/machine-learning-client/myenv/bin/gunicorn b/machine-learning-client/myenv/bin/gunicorn

deleted file mode 100755

index c6363878..00000000

--- a/machine-learning-client/myenv/bin/gunicorn

+++ /dev/null

@@ -1,8 +0,0 @@

-#!/Users/e/Desktop/4-containerized-app-exercise-team-ejent/machine-learning-client/myenv/bin/python3

-# -*- coding: utf-8 -*-

-import re

-import sys

-from gunicorn.app.wsgiapp import run

-if __name__ == '__main__':

- sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])

- sys.exit(run())

diff --git a/machine-learning-client/myenv/package_info.json b/machine-learning-client/myenv/package_info.json

deleted file mode 100644

index 9eac2a23..00000000

--- a/machine-learning-client/myenv/package_info.json

+++ /dev/null

@@ -1,3 +0,0 @@

-{

- "version": "0.0.89"

-}

\ No newline at end of file
