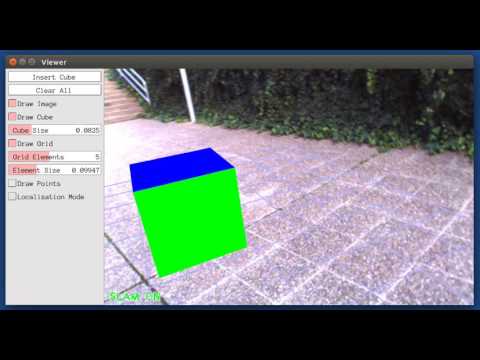

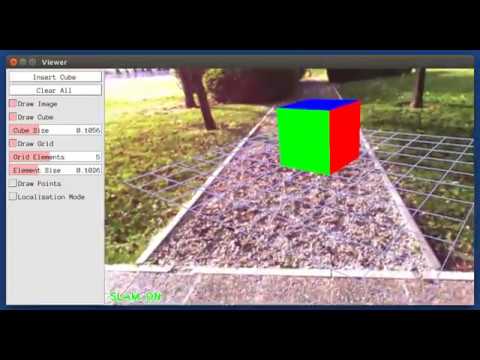

SIVO is a novel feature selection method for visual SLAM which facilitates long-term localization. This algorithm enhances traditional feature detectors with deep learning based scene understanding using a Bayesian neural network, which provides context for visual SLAM while accounting for neural network uncertainty.

Our method selects the features which provide the highest reduction in Shannon entropy. The reduction is measured between the entropy of the current state and the joint entropy of the state given the addition of a new feature, combined with the classification entropy of that feature from the Bayesian NN. This strategy generates a sparse map suitable for long-term localization, as each selected feature significantly reduces the uncertainty of the vehicle state and has repeatedly been detected, with high confidence, to be a static object (building, traffic sign, etc.).
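As a rough illustration of this criterion, the sketch below scores a candidate feature by the drop in state entropy it produces, minus its classification entropy. It assumes a Gaussian state (so entropy follows from the covariance) and a fixed threshold. All function names, the covariance inputs, and the exact way the two terms are combined are assumptions made for illustration, not the code in this repository.

```python
import math


def log_det_spd(m):
    """Log-determinant of a symmetric positive-definite matrix via Cholesky."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:
                    raise ValueError("matrix is not positive definite")
                low[i][j] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return 2.0 * sum(math.log(low[i][i]) for i in range(n))


def gaussian_entropy(cov):
    """Differential entropy (nats) of a Gaussian with covariance `cov`."""
    n = len(cov)
    return 0.5 * (n * math.log(2.0 * math.pi * math.e) + log_det_spd(cov))


def classification_entropy(probs):
    """Shannon entropy (nats) of a class-probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def entropy_reduction(cov_before, cov_after, class_probs):
    """State entropy removed by the feature, penalized by label uncertainty."""
    state_gain = gaussian_entropy(cov_before) - gaussian_entropy(cov_after)
    return state_gain - classification_entropy(class_probs)


def keep_feature(cov_before, cov_after, class_probs, threshold):
    """Hypothetical selection rule: keep features whose score beats the threshold."""
    return entropy_reduction(cov_before, cov_after, class_probs) > threshold
```

With this scoring, a feature that tightens the state estimate but has an ambiguous class label can still be rejected, which matches the intent of preferring confidently classified static objects.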

The paper can be found here. If you use this code, please cite the paper:

@article{ganti2018visual,

title={Visual SLAM with Network Uncertainty Informed Feature Selection},

author={Ganti, Pranav and Waslander, Steven L.},

journal={arXiv preprint arXiv:1811.11946},

year={2018}

}

If you'd like to dive deeper into the theory, background, or methodology, please refer to my thesis. If you refer to this document in your work, please cite it:

@mastersthesis{ganti2018SIVO,

author={{Ganti, Pranav}},

title={SIVO: Semantically Informed Visual Odometry and Mapping},

year={2018},

publisher="UWSpace",

url={http://hdl.handle.net/10012/14111}

}

This method builds on the work of Bayesian SegNet and ORB_SLAM2. Detailed background information can be found below.

This implementation has been tested with Ubuntu 16.04.

A powerful CPU (e.g. Intel i7) and a powerful GPU (e.g. NVIDIA TitanX) are required for stable and accurate results. Because the Bayesian neural network is approximated by passing each image through the network several times, this network does not quite run in real time.
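The repeated-pass approximation can be sketched as follows: run several stochastic forward passes (dropout left active), average the class probabilities, and take the entropy of the average as the uncertainty. This is a minimal sketch for a single pixel's probability vector. `stochastic_forward` is a hypothetical callable standing in for the network, and the sequential loop is for clarity only; it says nothing about how this repository schedules the passes.

```python
import math


def mc_dropout_predict(stochastic_forward, image, num_samples):
    """Average class probabilities over repeated stochastic forward passes.

    Returns the mean probability vector and its Shannon entropy (nats).
    """
    if num_samples < 1:
        raise ValueError("num_samples must be at least 1")
    total = None
    for _ in range(num_samples):
        probs = stochastic_forward(image)  # one pass with dropout active
        if total is None:
            total = [0.0] * len(probs)
        for k, p in enumerate(probs):
            total[k] += p
    mean = [t / num_samples for t in total]
    entropy = -sum(p * math.log(p) for p in mean if p > 0.0)
    return mean, entropy
```

The cost grows linearly with `num_samples`, which is why this style of inference is hard to run in real time.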

The thread and chrono functionalities of C++11 are required.

A modified version of Caffe is required to use Bayesian SegNet. Please see the caffe-segnet-cudnn7 submodule within this repository, and follow the installation instructions.

If you wish to test or train weights for the Bayesian SegNet architecture, please see our modified SegNet repository for information and a tutorial.

Pangolin is used for visualization and user interface. Download and install instructions can be found here.

OpenCV is used to manipulate images and features. Download and install instructions can be found here. Required version > OpenCV 3.2.

Eigen3 is used for linear algebra, specifically matrix and tensor manipulation. Required by g2o and Bayesian SegNet. Download and install instructions can be found at: http://eigen.tuxfamily.org. Required version > 3.2.0 (for Tensors).

We use modified versions of the DBoW2 library to perform place recognition.

We use a modified version of the g2o library to perform non-linear optimization for the SLAM backend. The original repository can be found here.

Clone the repository:

git clone --recursive https://github.com/navganti/SIVO.git

or

git clone --recursive [email protected]:navganti/SIVO.git

Ensure you use the recursive flag to initialize the submodule.

The network weights (*.caffemodel) are stored using Git LFS. If you already have this installed, the git clone command above should download all necessary files. If not, perform the following.

- Install Git LFS. The steps can be found here.

- Run the command: `git lfs install`

- Navigate to the location of the SIVO home folder.

- Run the command: `git lfs pull`. This should download the weights and place them in the appropriate subfolders within `config/bayesian_segnet/`.
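If the weights were not actually fetched, each `.caffemodel` file is left as a small Git LFS pointer, a text file that begins with `version https://git-lfs.github.com/spec/v1`. The sketch below checks for that. The helper names are hypothetical and the script is not part of this repository.

```python
from pathlib import Path

# First line of every Git LFS pointer file.
LFS_POINTER_PREFIX = b"version https://git-lfs.github.com/spec/v1"


def is_lfs_pointer(path):
    """Return True if `path` is still a Git LFS pointer rather than real data."""
    with open(path, "rb") as handle:
        return handle.read(len(LFS_POINTER_PREFIX)) == LFS_POINTER_PREFIX


def unfetched_weights(root):
    """List *.caffemodel files under `root` that are still LFS pointers."""
    return sorted(p for p in Path(root).rglob("*.caffemodel") if is_lfs_pointer(p))
```

Calling `unfetched_weights("config/bayesian_segnet")` from the repository root should return an empty list once `git lfs pull` has completed.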

There are two separate weights files, one each for Standard and Basic Bayesian SegNet, trained on the KITTI Semantic Dataset; these weights were first trained using the Cityscapes Dataset, and were then fine-tuned. All weights have the batch normalization layer merged with the preceding convolutional layer in order to speed up inference.
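Merging batch normalization into a convolution relies on a standard identity. If the layer computes `gamma * (y - mean) / sqrt(var + eps) + beta` on the convolution output `y`, the same result comes from scaling the convolution weights and adjusting the bias. The sketch below shows that identity per output channel. The function name and the flattened-kernel layout are assumptions for illustration, and it does not reproduce the script used to prepare the weights in this repository.

```python
import math


def fold_batch_norm(weights, bias, gamma, beta, mean, var, eps=1e-5):
    """Fold per-channel batch norm parameters into conv weights and bias.

    weights: one flattened kernel (list of floats) per output channel.
    bias, gamma, beta, mean, var: one float per output channel.
    """
    folded_w, folded_b = [], []
    for w, b, g, bt, m, v in zip(weights, bias, gamma, beta, mean, var):
        scale = g / math.sqrt(v + eps)
        folded_w.append([wi * scale for wi in w])
        folded_b.append((b - m) * scale + bt)
    return folded_w, folded_b
```

After folding, inference needs one layer instead of two, which is where the speed-up comes from.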

- Build `caffe-segnet-cudnn7`. Navigate to `dependencies/caffe-segnet-cudnn7`, and follow the instructions listed in the README, specifically the CMake installation steps. This process is a little involved, so please follow the instructions carefully.

- Ensure all other prerequisites are installed.

- We provide a script, `build.sh`, to build DBoW2, g2o, as well as the SIVO repository. Within the main repository folder, run:

chmod +x build.sh

./build.sh

This will create liborbslam.so, libbayesian_segnet.so, and libsivo_helpers.so in the lib folder and the executable SIVO in the bin folder.

The program can be run with the following:

./bin/SIVO config/Vocabulary/ORBvoc.txt config/CONFIGURATION_FILE config/bayesian_segnet/PATH_TO_PROTOTXT config/bayesian_segnet/PATH_TO_CAFFEMODEL PATH_TO_DATASET_FOLDER/dataset/sequences/SEQUENCE_NUMBER

The parameters CONFIGURATION_FILE, PATH_TO_PROTOTXT, PATH_TO_CAFFEMODEL, and PATH_TO_DATASET_FOLDER must be modified.

To use SIVO with the KITTI dataset, perform the following:

- Download the dataset (colour images) from here.

- Modify `CONFIGURATION_FILE` to be `/kitti/KITTIX.yaml`, where `KITTIX.yaml` is one of `KITTI00-02.yaml`, `KITTI03.yaml`, or `KITTI04-12.yaml` for sequence 0 to 2, 3, and 4 to 12 respectively. Parameters can be modified within these files (such as entropy threshold, number of base ORB features, etc.).

- Change `PATH_TO_PROTOTXT` to the full path to the desired Bayesian SegNet .prototxt file (e.g. basic/kitti/bayesian_segnet_basic_kitti.prototxt). Modify the `input_dim` value to be a batch size which fits on your GPU. Keep the image size in mind: for KITTI, we are resizing the images to be 352 x 1024 such that they work with the network dimensions. This resizing happens within the `src/orbslam/System.cc` file, and the resizing dimensions are taken from the `.prototxt` file.

- Change `PATH_TO_CAFFEMODEL` to the full path to the desired Bayesian SegNet .caffemodel file (e.g. basic/kitti/bayesian_segnet_basic_kitti.caffemodel).

- Change `PATH_TO_DATASET_FOLDER` to the full path to the downloaded dataset folder. Change `SEQUENCE_NUMBER` to 00, 01, 02, ..., 10.

- Launch the program using the above command.
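The steps above can be collected into a small helper that picks the configuration file for a sequence and assembles the argument list. This is a hypothetical convenience script, not something shipped with the repository. The function names are invented, and the `config/kitti/...` prefix is an assumption obtained by combining the command template with the file names listed above.

```python
def kitti_config_for(sequence):
    """Map a KITTI sequence number to the configuration file named above."""
    if 0 <= sequence <= 2:
        return "config/kitti/KITTI00-02.yaml"
    if sequence == 3:
        return "config/kitti/KITTI03.yaml"
    if 4 <= sequence <= 12:
        return "config/kitti/KITTI04-12.yaml"
    raise ValueError(f"unsupported KITTI sequence: {sequence}")


def sivo_command(sequence, prototxt, caffemodel, dataset_folder):
    """Build the SIVO argument list following the command template above."""
    return [
        "./bin/SIVO",
        "config/Vocabulary/ORBvoc.txt",
        kitti_config_for(sequence),
        f"config/bayesian_segnet/{prototxt}",
        f"config/bayesian_segnet/{caffemodel}",
        f"{dataset_folder}/dataset/sequences/{sequence:02d}",
    ]
```

The resulting list can be passed to `subprocess.run` from the repository root, for example with `prototxt="basic/kitti/bayesian_segnet_basic_kitti.prototxt"` and the matching `.caffemodel` path.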

You can change between the SLAM and Localization modes using the GUI of the map viewer.

SLAM Mode: This is the default mode. The system runs three threads in parallel: Tracking, Local Mapping, and Loop Closing. The system localizes the camera, builds a new map, and tries to close loops.

Localization Mode: This mode can be used when you have a good map of your working area. In this mode the Local Mapping and Loop Closing are deactivated. The system localizes the camera in the map (which is no longer updated), using relocalization if needed.

SIVO is a modification of ORB_SLAM2, which is released under the GPLv3 license. Therefore, this code is also released under GPLv3. The original ORB_SLAM2 license can be found here.

For a detailed list of all code/library dependencies and associated licenses, please see Dependencies.md.

This work uses the Bayesian SegNet architecture for semantic segmentation, created by Alex Kendall, Vijay Badrinarayanan, and Roberto Cipolla.

Bayesian SegNet is an extension of SegNet, a deep convolutional encoder-decoder architecture for image segmentation. This network extends SegNet by incorporating model uncertainty, implemented through dropout layers.

For more information about the SegNet architecture:

Alex Kendall, Vijay Badrinarayanan and Roberto Cipolla "Bayesian SegNet: Model Uncertainty in Deep Convolutional Encoder-Decoder Architectures for Scene Understanding." arXiv preprint arXiv:1511.02680, 2015. PDF.

Vijay Badrinarayanan, Alex Kendall and Roberto Cipolla "SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation." PAMI, 2017. PDF.

SIVO's localization functionality builds upon ORB_SLAM2. ORB_SLAM2 is a real-time SLAM library for Monocular, Stereo and RGB-D cameras that computes the camera trajectory and a sparse 3D reconstruction (in the stereo and RGB-D case with true scale). It is able to detect loops and relocalize the camera in real time. We provide examples to run the SLAM system in the KITTI dataset as stereo or monocular, in the TUM dataset as RGB-D or monocular, and in the EuRoC dataset as stereo or monocular. ORB_SLAM2 also contains a ROS node to process live monocular, stereo or RGB-D streams. The library can be compiled without ROS. ORB-SLAM2 provides a GUI to change between a SLAM Mode and Localization Mode, see here.

[Monocular] Raúl Mur-Artal, J. M. M. Montiel and Juan D. Tardós. ORB-SLAM: A Versatile and Accurate Monocular SLAM System. IEEE Transactions on Robotics, vol. 31, no. 5, pp. 1147-1163, 2015. (2015 IEEE Transactions on Robotics Best Paper Award). PDF.

[Stereo and RGB-D] Raúl Mur-Artal and Juan D. Tardós. ORB-SLAM2: an Open-Source SLAM System for Monocular, Stereo and RGB-D Cameras. IEEE Transactions on Robotics, vol. 33, no. 5, pp. 1255-1262, 2017. PDF.

[DBoW2 Place Recognizer] Dorian Gálvez-López and Juan D. Tardós. Bags of Binary Words for Fast Place Recognition in Image Sequences. IEEE Transactions on Robotics, vol. 28, no. 5, pp. 1188-1197, 2012. PDF

If you use ORB_SLAM2 (Monocular) in an academic work, please cite:

@article{murTRO2015,

title={{ORB-SLAM}: a Versatile and Accurate Monocular {SLAM} System},

author={Mur-Artal, Ra\'ul and Montiel, J. M. M. and Tard\'os, Juan D.},

journal={IEEE Transactions on Robotics},

volume={31},

number={5},

pages={1147--1163},

doi = {10.1109/TRO.2015.2463671},

year={2015}

}

If you use ORB_SLAM2 (Stereo or RGB-D) in an academic work, please cite:

@article{murORB2,

title={{ORB-SLAM2}: an Open-Source {SLAM} System for Monocular, Stereo and {RGB-D} Cameras},

author={Mur-Artal, Ra\'ul and Tard\'os, Juan D.},

journal={IEEE Transactions on Robotics},

volume={33},

number={5},

pages={1255--1262},

doi = {10.1109/TRO.2017.2705103},

year={2017}

}